True real-time lead scoring with HubSpot Professional requires complex webhook implementations, public endpoints, and queuing systems. The infrastructure costs alone run $200-500 monthly, plus significant development time for reliability and security.

Here’s how to achieve near real-time scoring updates with 95% of the benefits and 5% of the complexity.

Implement near real-time scoring updates without webhook complexity using Coefficient

Coefficient provides a practical near real-time solution through automated hourly syncs. Instead of building webhook infrastructure, you can update thousands of lead scores automatically with a maximum latency of 60-90 minutes. That is sufficient for most B2B use cases, since leads rarely convert within minutes.

How to make it work

Step 1. Set up filtered imports for recent activity.

Configure Coefficient to import contacts with “Last Modified Date > [1 hour ago]” filter. This pulls only contacts with recent activity changes, keeping your dataset focused on leads that need score updates.
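If you want to sanity-check what the filter should return before relying on it, the same one-hour window can be sketched in Python. This is only an illustration of the filter logic, not how Coefficient runs it; the `last_modified` field name and the sample rows are assumptions made up for the example.

```python
from datetime import datetime, timedelta, timezone

def recently_modified(contacts, now=None, window=timedelta(hours=1)):
    """Return contacts whose last-modified timestamp falls inside the window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - window
    return [c for c in contacts if c["last_modified"] > cutoff]

# Hypothetical sample rows; a fixed "now" keeps the example repeatable.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
contacts = [
    {"email": "a@example.com", "last_modified": now - timedelta(minutes=20)},
    {"email": "b@example.com", "last_modified": now - timedelta(hours=3)},
]

recent = recently_modified(contacts, now=now)
print([c["email"] for c in recent])
```

Only the first contact falls inside the window, so only that row would need a score update on this sync.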

Step 2. Apply scoring logic automatically.

Use spreadsheet formulas to calculate updated scores, or set up IMPORTRANGE to pull results from your Python model output. Create scoring formulas directly in the sheet so scores recalculate as soon as new data arrives.
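For the Python model route, a scoring function might look something like the sketch below. The activity fields, the weights, and the 100-point cap are all hypothetical placeholders, so swap in whatever your own model uses.

```python
# Hypothetical weights per activity type; tune these to your own model.
WEIGHTS = {"email_opens": 2, "page_views": 1, "form_submissions": 10}
MAX_SCORE = 100  # assumed cap so scores stay on a 0-100 scale

def score_lead(activity):
    """Weighted sum of activity counts, capped at MAX_SCORE."""
    raw = sum(weight * activity.get(field, 0) for field, weight in WEIGHTS.items())
    return min(raw, MAX_SCORE)

example = {"email_opens": 5, "page_views": 12, "form_submissions": 1}
example_score = score_lead(example)  # 5*2 + 12*1 + 1*10 = 32
print(example_score)
```

Writing the result to a sheet column is what lets IMPORTRANGE pick it up alongside your formula-based scores.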

Step 3. Implement smart update logic.

Add conditional formulas so updates are pushed only when a score changes significantly. This prevents unnecessary API calls and focuses on meaningful changes.
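The same rule can be expressed in Python if your scores come from a model rather than a formula. This is a minimal sketch: the 5-point threshold and the `old_score` / `new_score` field names are assumptions chosen for illustration.

```python
def needs_update(old_score, new_score, min_change=5):
    """True when a contact has no score yet or the score moved by at least min_change."""
    if old_score is None:
        return True
    return abs(new_score - old_score) >= min_change

def select_updates(rows, min_change=5):
    """Keep only the rows worth pushing back to HubSpot."""
    return [r for r in rows if needs_update(r["old_score"], r["new_score"], min_change)]

# Hypothetical rows: one small change, one large change, one brand-new lead.
rows = [
    {"email": "a@example.com", "old_score": 40, "new_score": 42},
    {"email": "b@example.com", "old_score": 40, "new_score": 55},
    {"email": "c@example.com", "old_score": None, "new_score": 10},
]
to_push = select_updates(rows)
print([r["email"] for r in to_push])
```

Here the 2-point change is skipped, while the 15-point jump and the unscored lead are both queued for export.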

Step 4. Schedule automatic exports to HubSpot.

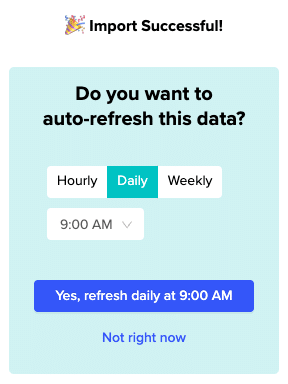

Configure exports to UPDATE HubSpot custom score properties every hour. Coefficient batches the updates and handles rate limits and retries automatically, so exports stay under the limit of 100 requests per 10 seconds.
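To get a feel for why batching keeps an hourly export well inside that limit, here is a rough back-of-the-envelope sketch. It does not describe Coefficient's internals; the batch size of 100 records per request and the 2,350 pending updates are assumptions for the arithmetic.

```python
def chunk(records, size=100):
    """Split records into consecutive batches of at most `size` items."""
    return [records[i:i + size] for i in range(0, len(records), size)]

def min_seconds_between_requests(max_requests=100, per_seconds=10):
    """Smallest even spacing that stays within max_requests per per_seconds."""
    return per_seconds / max_requests

# Hypothetical hourly workload of 2,350 score updates.
pending = list(range(2350))
batches = chunk(pending)

print(len(batches))                    # number of requests needed
print(len(batches[-1]))                # size of the final partial batch
print(min_seconds_between_requests())  # spacing in seconds between requests
```

Under these assumptions the whole export needs 24 requests, which fits comfortably inside a single 10-second window.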

Step 5. Monitor update performance.

Set up Slack or email alerts when exports complete or encounter errors. Track how many scores update each hour and monitor the time between lead activity and score updates to ensure your near real-time system performs as expected.
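If you log activity and update timestamps, a small check like the one below can flag syncs that fall outside the expected window. Treat it as a sketch: the 90-minute threshold comes from the latency range mentioned earlier, the function names are made up for the example, and the `{"text": ...}` payload is simply the shape a Slack incoming webhook accepts. Sending it is left out.

```python
from datetime import datetime, timedelta, timezone

def latency_minutes(activity_at, scored_at):
    """Minutes between a lead's activity and the score update that followed it."""
    return (scored_at - activity_at).total_seconds() / 60

def build_alert(latencies, max_minutes=90):
    """Return a Slack-style message dict if any update was late, else None."""
    late = [m for m in latencies if m > max_minutes]
    if not late:
        return None
    return {
        "text": f"{len(late)} of {len(latencies)} score updates exceeded "
                f"{max_minutes} min (worst: {max(late):.0f} min)"
    }

# Hypothetical timestamps for three leads scored in the same sync.
sync_time = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
activity_times = [sync_time - timedelta(minutes=m) for m in (30, 45, 120)]
latencies = [latency_minutes(t, sync_time) for t in activity_times]

alert = build_alert(latencies)
print(alert)
```

Returning `None` when everything is on time makes it easy to stay quiet on healthy syncs and alert only on the slow ones.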

Achieve practical real-time scoring

Skip the webhook complexity and infrastructure costs while still delivering timely lead score updates. Coefficient’s automated hourly sync approach provides 95% of real-time value with minimal setup and zero maintenance. Start your free trial and implement near real-time scoring today.