Filtering Activity reports by User custom fields on Salesforce dashboards is problematic because Activity objects don’t expose related User fields in dashboard filter contexts, even when these fields are available in the underlying reports.

Native workarounds like formula fields or custom report types don’t consistently resolve these field visibility issues. The approach below avoids the limitation by moving the filtering into a spreadsheet.

Filter Activity reports by User custom fields with Coefficient

Coefficient imports Task and Event records into your spreadsheet with the User relationship fields included as columns, so you can filter Activity data on any User custom field you import.

How to make it work

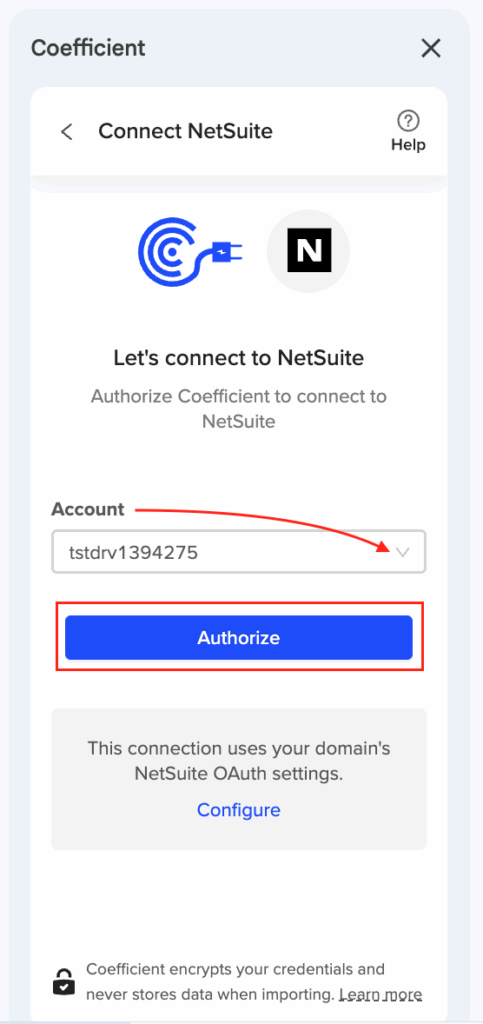

Step 1. Import Activity data with User relationship fields.

Use Coefficient’s Salesforce connector to pull Task and Event records. Include User relationship fields like “Sales_Region__c (Owner)”, “Department__c (Owner)”, or “Territory__c (Owner)” to access all the User custom fields you need for filtering.
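To make the shape of the imported data concrete, here is a minimal Python sketch of what "Activity rows with User relationship fields included" looks like. It is not Coefficient's implementation: the sample records, the `attach_owner_fields` helper, and the "Field (Owner)" column naming are assumptions based on the field names quoted above.

```python
# Hypothetical sample data; the custom field names mirror the examples in the text.
users = {
    "005A": {"Sales_Region__c": "EMEA", "Department__c": "Sales"},
    "005B": {"Sales_Region__c": "AMER", "Department__c": "Support"},
}
activities = [
    {"Id": "00T1", "Type": "Task", "Subject": "Call", "OwnerId": "005A"},
    {"Id": "00U1", "Type": "Event", "Subject": "Demo", "OwnerId": "005B"},
]


def attach_owner_fields(activities, users, fields):
    """Return new rows with each requested owner field copied in as a 'Field (Owner)' column."""
    rows = []
    for activity in activities:
        owner = users.get(activity["OwnerId"], {})
        row = dict(activity)  # copy so the input records are not modified
        for field in fields:
            row[f"{field} (Owner)"] = owner.get(field)
        rows.append(row)
    return rows


flat_rows = attach_owner_fields(activities, users, ["Sales_Region__c", "Department__c"])
```

Each row in `flat_rows` now carries its owner's region and department next to the Activity fields, which is what makes the filtering in the next steps possible.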

Step 2. Create dynamic filter controls in your spreadsheet.

Set up dropdown filters or input cells that reference User custom fields directly. These filters can use AND/OR logic for complex combinations, reference cell values for easy stakeholder control, and combine multiple User custom fields simultaneously.
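The AND/OR logic described above can be sketched in a few lines of Python. This is only an illustration of the filtering rule, not a spreadsheet formula or a Coefficient feature: the `controls` dictionary stands in for the dropdown or input cells, and `filter_rows` is a hypothetical helper.

```python
# Hypothetical imported rows with owner fields already attached as columns.
rows = [
    {"Subject": "Call", "Sales_Region__c (Owner)": "EMEA", "Department__c (Owner)": "Sales"},
    {"Subject": "Demo", "Sales_Region__c (Owner)": "AMER", "Department__c (Owner)": "Support"},
    {"Subject": "Email", "Sales_Region__c (Owner)": "EMEA", "Department__c (Owner)": "Support"},
    {"Subject": "Lunch", "Sales_Region__c (Owner)": "AMER", "Department__c (Owner)": "Sales"},
]


def filter_rows(rows, criteria, mode="AND"):
    """Keep rows matching the criteria; an empty or None value disables that filter."""
    active = {col: val for col, val in criteria.items() if val not in (None, "")}
    if not active:
        return list(rows)
    combine = all if mode == "AND" else any
    return [r for r in rows if combine(r.get(col) == val for col, val in active.items())]


# Stand-in for the filter cells a stakeholder would edit.
controls = {"Sales_Region__c (Owner)": "EMEA", "Department__c (Owner)": "Support"}
both = filter_rows(rows, controls, mode="AND")
either = filter_rows(rows, controls, mode="OR")
```

With these sample rows, `both` keeps only the "Email" activity, while `either` keeps every row except "Lunch". Clearing a control value removes that condition, which mirrors leaving a dropdown blank.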

Step 3. Build interactive dashboards with advanced filtering.

Create pivot tables, charts, and summary views with full filtering functionality. Filter by Sales Region and Department together, use date ranges for Activity dates, and create dynamic filters that update when stakeholders change criteria.
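As a rough illustration of what a pivot table over this data computes, the following Python sketch counts activities per Sales Region and Department within a date range. The sample rows, the `summarize` helper, and the `ActivityDate` column are assumptions made for the example.

```python
from collections import Counter
from datetime import date

REGION = "Sales_Region__c (Owner)"
DEPT = "Department__c (Owner)"

# Hypothetical imported rows.
rows = [
    {"ActivityDate": date(2024, 1, 5), REGION: "EMEA", DEPT: "Sales"},
    {"ActivityDate": date(2024, 1, 20), REGION: "EMEA", DEPT: "Sales"},
    {"ActivityDate": date(2024, 2, 2), REGION: "AMER", DEPT: "Support"},
    {"ActivityDate": date(2024, 3, 15), REGION: "EMEA", DEPT: "Support"},
]


def summarize(rows, start, end):
    """Count activities per (region, department) with ActivityDate in [start, end]."""
    counts = Counter()
    for row in rows:
        if start <= row["ActivityDate"] <= end:
            counts[(row[REGION], row[DEPT])] += 1
    return counts


summary = summarize(rows, date(2024, 1, 1), date(2024, 2, 29))
```

Changing the `start` and `end` arguments plays the role of a stakeholder adjusting the date-range cells: the summary is recomputed from the same imported rows.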

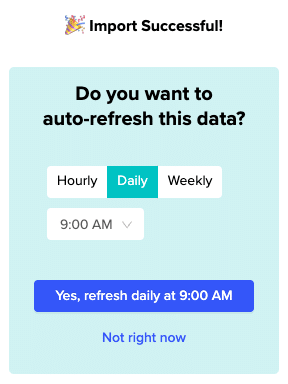

Step 4. Schedule automatic updates to maintain current data.

Set up hourly, daily, or weekly refresh schedules to keep your Activity and User data current. Your filtering setup remains intact while the underlying data updates automatically from Salesforce.

Get flexible filtering without platform restrictions

This approach avoids the inconsistent field availability of native Salesforce dashboard filters and gives you more flexible filtering than the platform allows. Start building better Activity reports with reliable User field filtering.